AI Agents

Deploy Autonomous AI Agents That Work For You

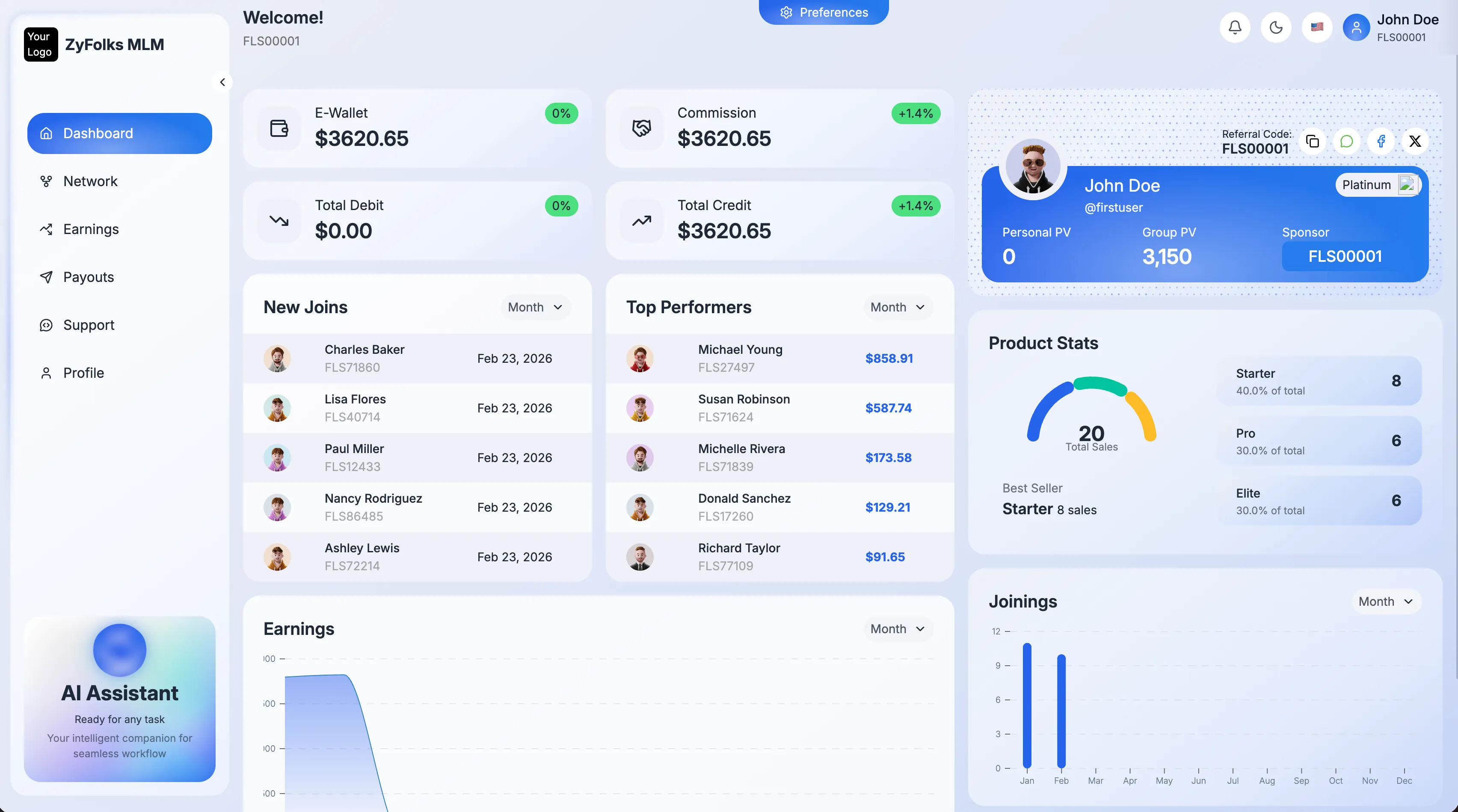

Zyfolks builds custom AI agents that handle work end to end — answering customer questions, qualifying leads, researching prospects, pulling data from internal systems, and surfacing answers from your team's documents. Unlike chatbots that hand off the moment things get hard, our agents carry real context, use tools, and know when to escalate to a human.

Each agent is built for a specific job: support, sales, research, operations, internal search. We design around your data, your tone of voice, and the guardrails you need for compliance, safety, and customer trust.

What Are AI Agents?

An AI agent is an autonomous system that takes a goal, plans the steps to reach it, uses tools (APIs, databases, search, your internal systems) to gather information, and produces a result — all without step-by-step human instructions. Agents hold conversation context, remember prior interactions, and adapt to new information in real time.
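The goal, plan, tool, result cycle described above can be sketched in a few lines. This is a minimal illustration, not a description of any production implementation: `plan_next_step` is a hypothetical rule-based stand-in for the language model, and `lookup_order` with its sample data is an invented tool, so the loop runs without any external service.

```python
from typing import Callable


# Hypothetical tool: a stand-in for a real API or database lookup.
def lookup_order(order_id: str) -> str:
    orders = {"A100": "shipped", "A200": "processing"}
    return orders.get(order_id, "unknown")


# Registry of tools the agent is able to call, keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}


def plan_next_step(goal: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the language model: returns (action, argument).

    A real agent would ask a model to choose the next action; this
    rule-based version exists only so the loop can run offline.
    """
    if not observations:
        # Assumption: the order ID is the last word of the goal.
        return ("lookup_order", goal.split()[-1])
    return ("finish", f"Order status: {observations[-1]}")


def run_agent(goal: str, max_steps: int = 5) -> str:
    """Plan, act, observe, and repeat until done or out of steps."""
    observations: list[str] = []
    for _ in range(max_steps):
        action, argument = plan_next_step(goal, observations)
        if action == "finish":
            return argument
        # Call the chosen tool and keep its result as context.
        observations.append(TOOLS[action](argument))
    # Running out of steps is treated as a signal to hand off to a human.
    return "escalate: step limit reached"


print(run_agent("Check status of order A100"))
```

The step limit is the important design choice here: an agent that cannot finish within its budget returns an escalation marker instead of looping indefinitely.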

Our agents are production-grade: grounded in your data, observable at every step, versioned like code, and safe by design. You decide what they can do, who they can talk to, and when a human has to confirm.

Key Features

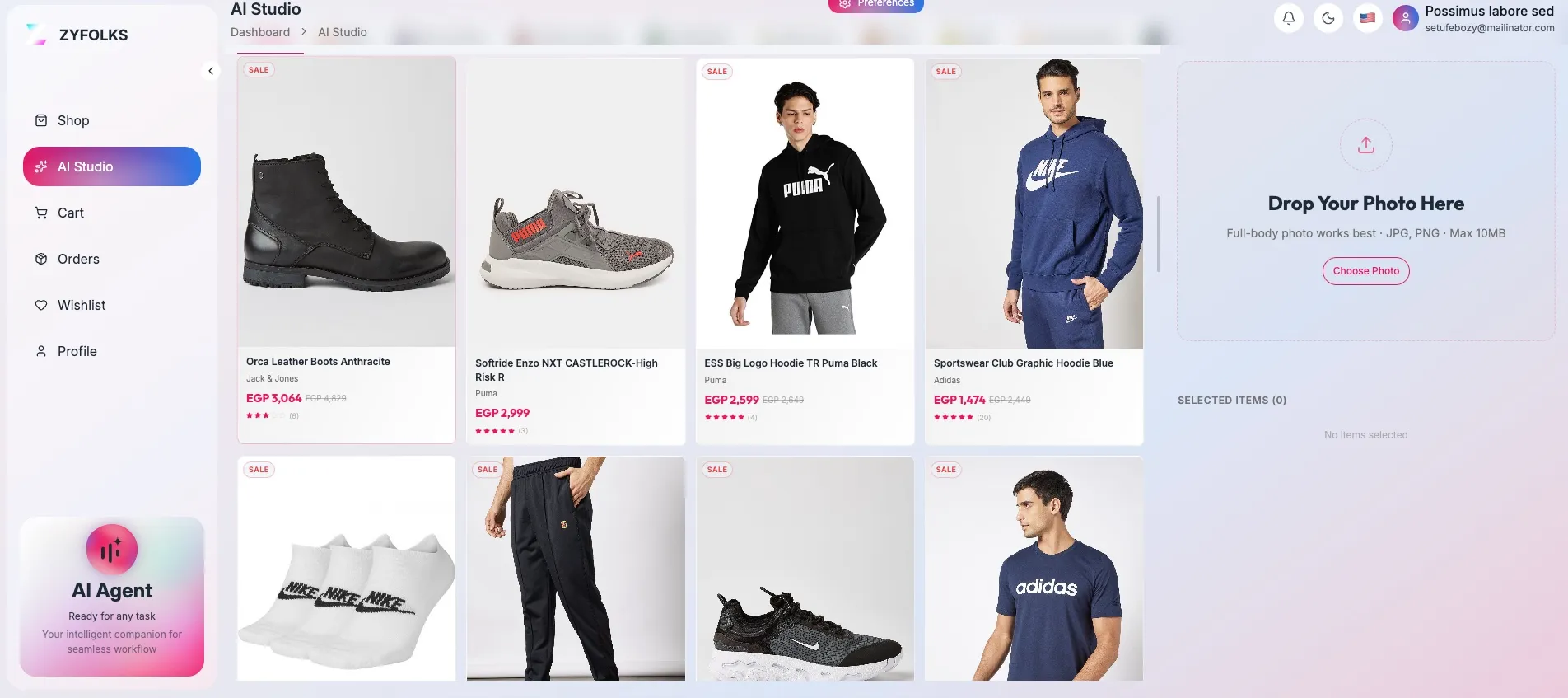

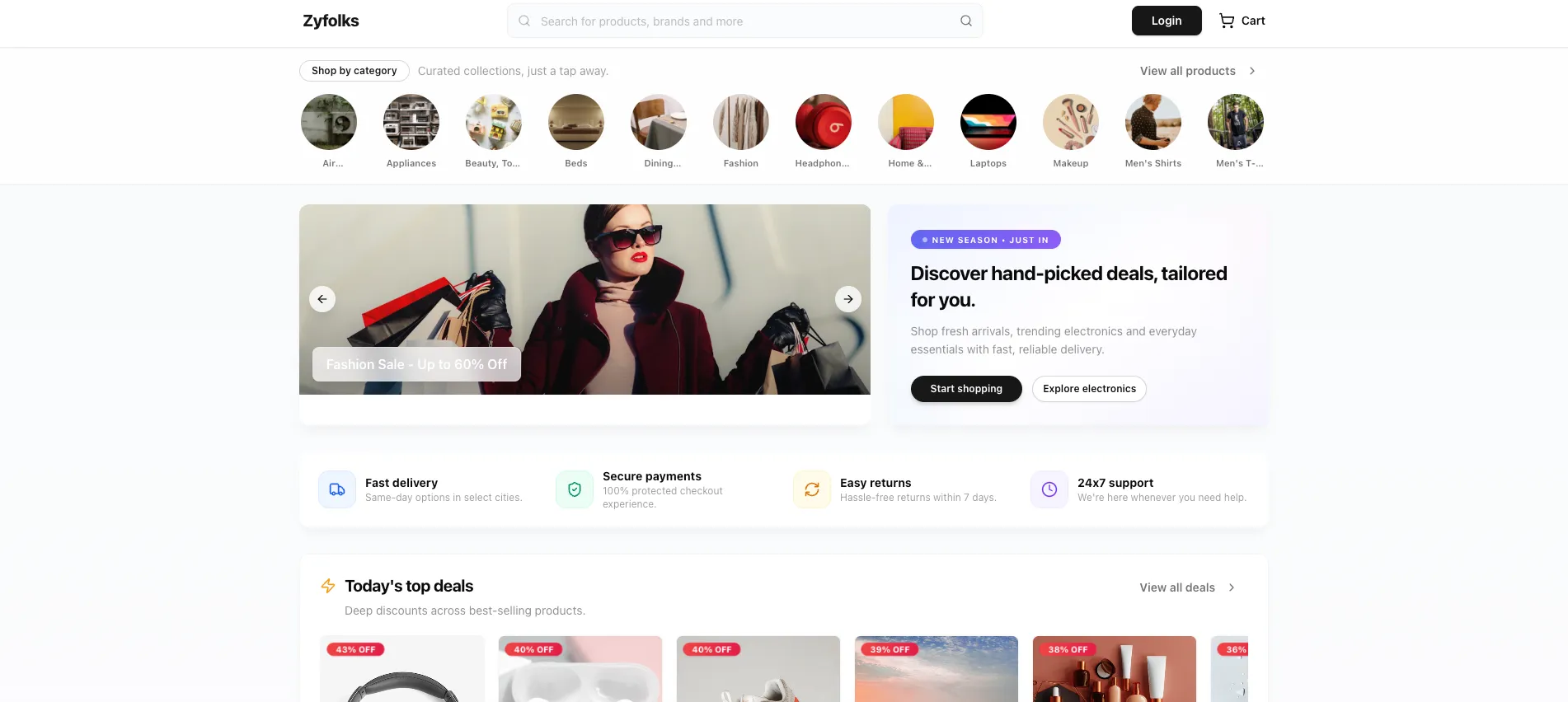

- Conversational Support & Sales Agents: Handle customer questions, qualify leads, and book meetings — across chat, email, and messaging platforms.

- Internal Knowledge Agents (RAG): Retrieval-augmented agents that answer from your docs, wikis, Notion, SharePoint, or internal databases with source citations.

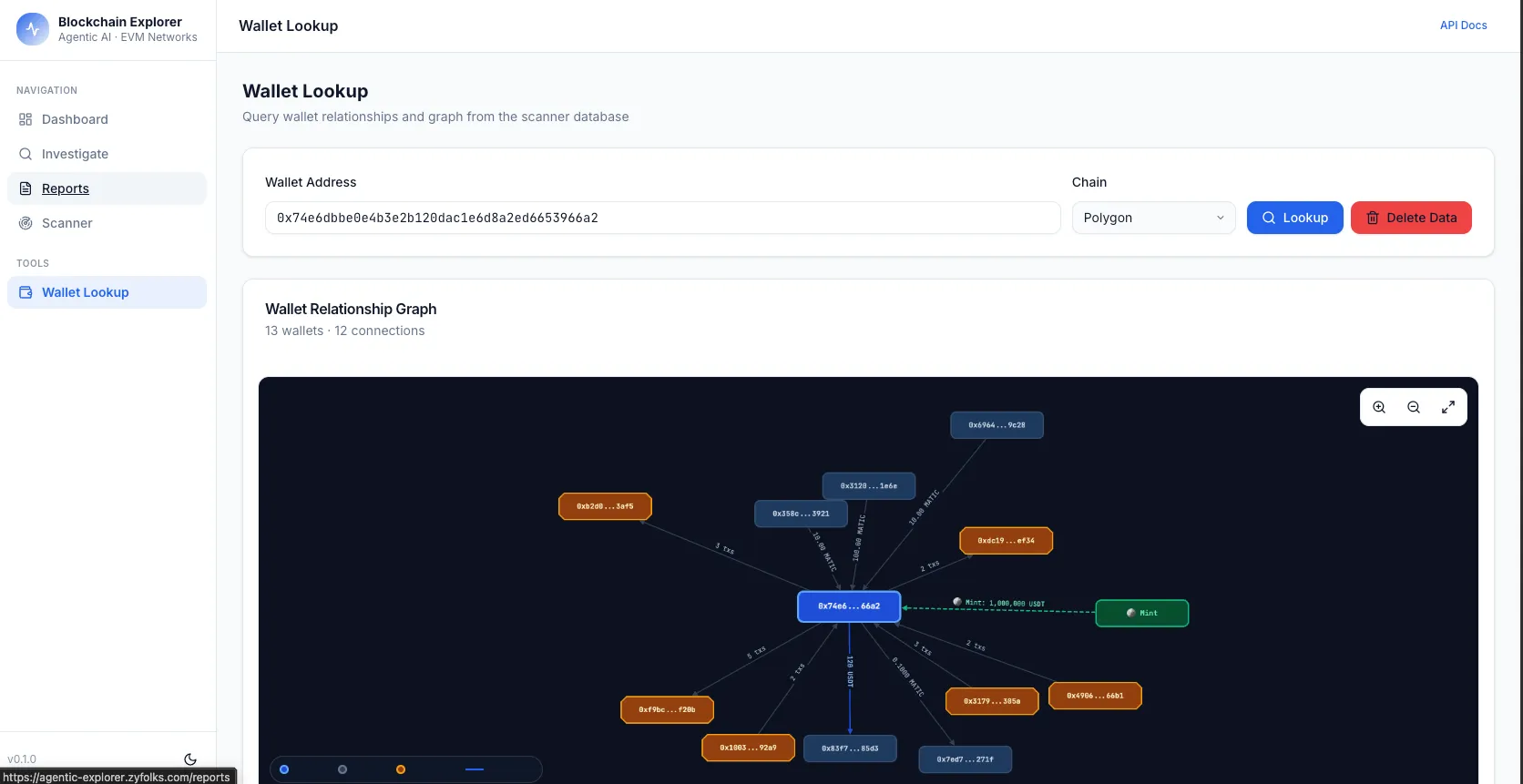

- Research & Data-Gathering Agents: Multi-step research, competitive intelligence, and prospect enrichment delivered as structured reports.

- Sales Qualification & Outreach Agents: Personalized outreach, follow-up sequences, and CRM updates driven by signal — not templates.

- Multi-Agent Orchestration: Specialized agents that collaborate — a planner, a researcher, a writer — to solve complex tasks.

- Tool & API Integration: Agents that call your internal APIs, query databases, send messages, and update systems of record.

- Guardrails, Observability & Human Handoff: Policy enforcement, PII redaction, full tracing, and clean escalation paths when the agent hits its limits.

Frequently Asked Questions

Common questions about designing, launching, and governing AI agents.

A chatbot answers questions. An AI agent takes actions. Agents use language models to reason through a task, call tools and APIs, read and write data, and loop through steps until the job is done — all with minimal human supervision. Think of an agent as a digital teammate, not a help article.

Typical use cases: customer support that can resolve issues end-to-end (not just answer questions), sales agents that research prospects and draft outreach, internal knowledge agents that answer questions from your docs and ticket history, and research agents that gather, compare, and summarize information from the web and internal sources.

Most production agents run on OpenAI (GPT-4, GPT-4 Turbo) or Anthropic (Claude) models because they're reliable at tool use and reasoning. For privacy-heavy workloads we run open-source models (Llama, Mistral) on your infrastructure. We choose the model for each use case rather than defaulting to one stack.

Agents run inside guardrails: allowlists of tools/APIs they can call, hard caps on spend and time, validation on every output, human-in-the-loop checkpoints for risky actions, and full observability so you can audit every step the agent took and why.
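As a rough sketch of how such checks might fit together, the example below gates each tool call on an allowlist, a spend cap, and a human-approval requirement, and records every decision for later audit. The `Guardrails` class, its field names, and the tool names in the usage lines are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    """Hypothetical policy object consulted before every tool call."""

    allowed_tools: set[str]    # allowlist of callable tools
    needs_approval: set[str]   # risky tools that require a human sign-off
    max_spend_usd: float       # hard cap on cumulative spend
    spent_usd: float = 0.0
    audit_log: list[str] = field(default_factory=list)

    def check(self, tool: str, cost_usd: float, approved: bool = False) -> str:
        """Return 'allow', 'deny', or 'needs_human' and log the decision."""
        if tool not in self.allowed_tools:
            decision = "deny"
        elif self.spent_usd + cost_usd > self.max_spend_usd:
            decision = "deny"
        elif tool in self.needs_approval and not approved:
            decision = "needs_human"
        else:
            decision = "allow"
            self.spent_usd += cost_usd  # only allowed calls count as spend
        self.audit_log.append(f"{tool}: {decision}")
        return decision


rails = Guardrails(
    allowed_tools={"search_docs", "issue_refund"},
    needs_approval={"issue_refund"},
    max_spend_usd=1.0,
)
print(rails.check("search_docs", 0.4))     # tool on the allowlist, within budget
print(rails.check("delete_account", 0.0))  # tool not on the allowlist
print(rails.check("issue_refund", 0.1))    # risky tool, no approval yet
```

Keeping the decision and the audit entry in one place means every call, including denied ones, leaves a trace.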

Agents can work with your internal data. We build RAG (retrieval-augmented generation) pipelines that index your documents, tickets, wikis, and databases, then give the agent semantic search over that knowledge. The agent reads only the sources you grant it, and every access is logged.
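The retrieval step with source-level access control could look roughly like the sketch below. To stay self-contained it ranks documents by simple keyword overlap, which is a deliberate simplification and not real semantic search; a production pipeline would typically use embeddings and a vector index. The `Doc` type, the `retrieve` function, and the sample sources are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    source: str  # where the text came from, e.g. a wiki path
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(
    query: str,
    docs: list[Doc],
    granted: set[str],
    access_log: list[str],
    top_k: int = 2,
) -> list[Doc]:
    """Return up to top_k matching docs from granted sources only."""
    query_words = tokenize(query)
    # Access control happens before ranking: ungranted sources are never read.
    readable = [d for d in docs if d.source in granted]
    scored = [(len(query_words & tokenize(d.text)), d) for d in readable]
    # Keyword overlap is a stand-in for semantic similarity (assumption).
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    hits = [d for _, d in ranked[:top_k]]
    access_log.extend(d.source for d in hits)  # record every source returned
    return hits


docs = [
    Doc("wiki/refunds", "Refunds are issued within 5 days."),
    Doc("hr/salaries", "Salary bands and refunds for staff."),
    Doc("wiki/shipping", "Shipping takes 3 days."),
]
log: list[str] = []
hits = retrieve("How long do refunds take?", docs, {"wiki/refunds", "wiki/shipping"}, log)
print([d.source for d in hits], log)
```

Returning the `source` alongside the text is what lets an agent cite where an answer came from.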

A focused single-purpose agent (e.g. support triage, sales research) typically starts at ₹3L–₹8L (roughly \$3.5k–\$10k) for build and deployment, plus ongoing LLM API costs. Complex multi-agent systems with custom tools run much higher. See our AI chatbot cost guide for detailed tiers.