AI Agents vs AI Automation: Which Do You Actually Need?

Every week we hear the same question: "Do we need an AI agent or just an automation?" They sound similar, and vendors happily blur the line, but the difference matters once you start building: the two have very different cost profiles, failure modes, and engineering patterns.

TL;DR

Automation executes pre-defined workflows fast and cheap — right for predictable, repetitive tasks. Agents reason, call tools, and make judgement calls — right when each task is different and needs understanding, not just execution. Most mature systems combine both: an agent orchestrates, automations do the heavy lifting.

What AI Automation Actually Does

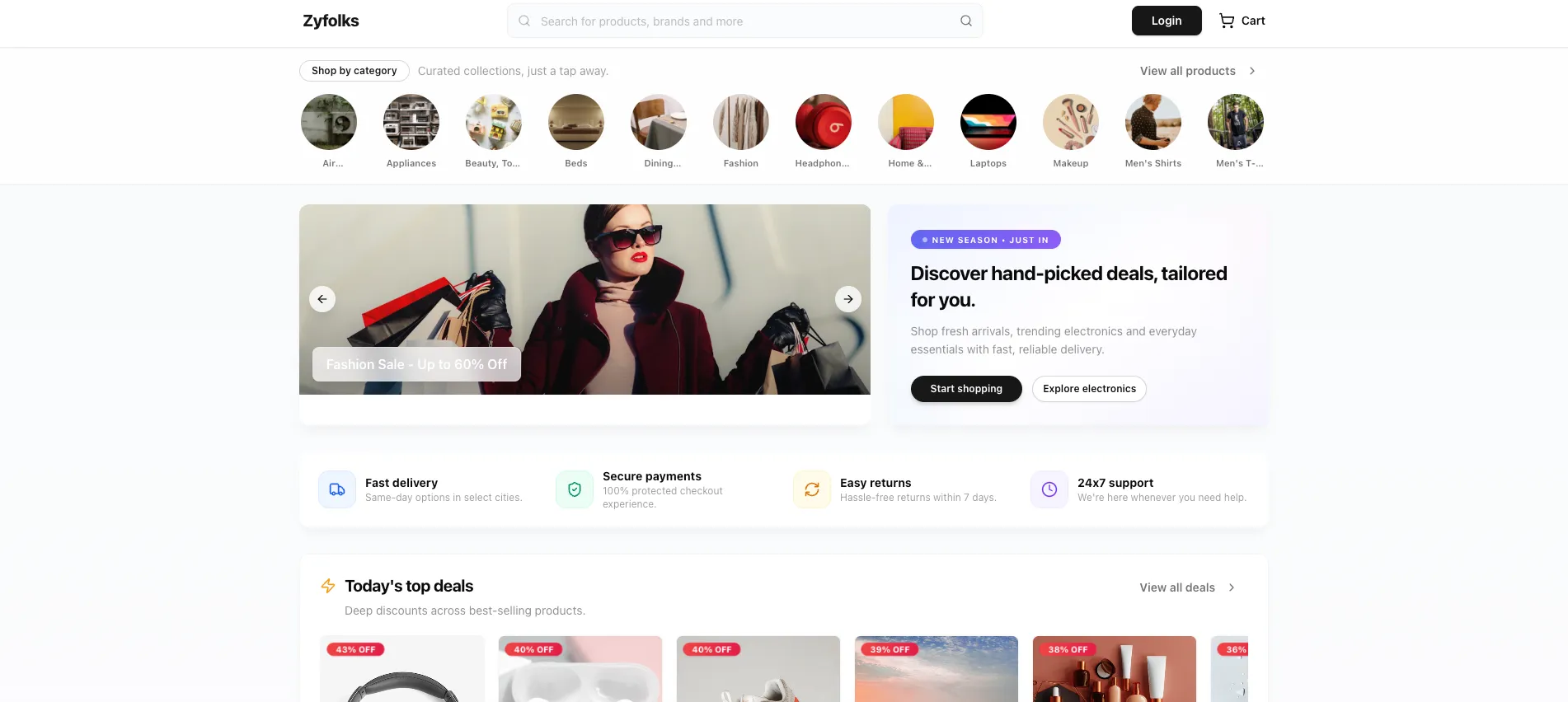

AI automation is the evolution of tools like Zapier, n8n, or traditional RPA — but instead of only following rigid if-then rules, it uses language models to read unstructured input (emails, PDFs, chat logs, screenshots) and make smarter routing decisions. The workflow itself is still pre-defined; the AI just handles the fuzzy bits.

Typical patterns: parse inbound emails and route them, extract structured data from invoices, enrich CRM records from LinkedIn, summarize meeting transcripts, or auto-tag support tickets. We cover this in depth on our AI Automation page.
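To make the "pre-defined workflow, AI handles the fuzzy bits" idea concrete, here is a minimal sketch of email routing. The queue names, the `Email` type, and `keyword_classifier` are all hypothetical; the keyword function is only a stand-in for whatever language-model call would do the classification in a real setup.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical queue names; a real deployment would use its own.
ROUTES = {"billing": "finance-queue", "bug": "support-queue", "sales": "sales-queue"}
FALLBACK_QUEUE = "manual-triage"


@dataclass
class Email:
    sender: str
    subject: str
    body: str


def keyword_classifier(text: str) -> str:
    """Stand-in for the LLM step: maps free-form text to a label."""
    lowered = text.lower()
    if "invoice" in lowered or "refund" in lowered:
        return "billing"
    if "error" in lowered or "crash" in lowered:
        return "bug"
    if "pricing" in lowered or "demo" in lowered:
        return "sales"
    return "unknown"


def route_email(email: Email, classify: Callable[[str], str] = keyword_classifier) -> str:
    """Fixed workflow: classify, then look up the queue. The path never changes."""
    label = classify(f"{email.subject}\n{email.body}")
    # Unknown or unexpected labels go to a human queue instead of being guessed.
    return ROUTES.get(label, FALLBACK_QUEUE)
```

The only "intelligent" part is the `classify` argument. Swapping the keyword stub for a model call changes how well the fuzzy input is read, but not the shape of the workflow, which is what keeps this kind of system cheap to test.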

What AI Agents Actually Do

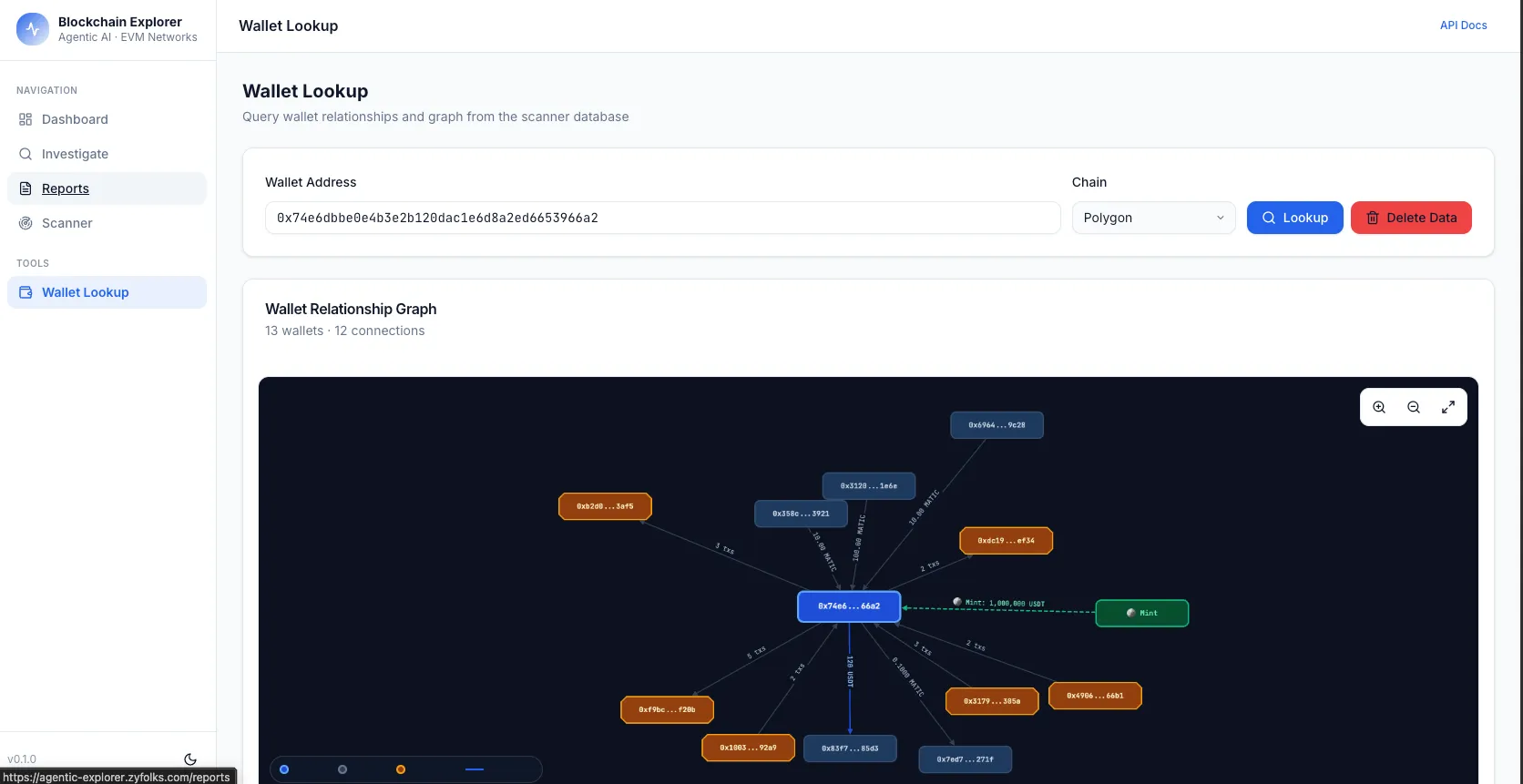

Agents are software that reasons. Given a goal, an agent figures out the steps, calls tools (APIs, search, your internal data, other agents), reads the results, and loops until the task is complete. No pre-defined workflow — the agent builds its own path per task.

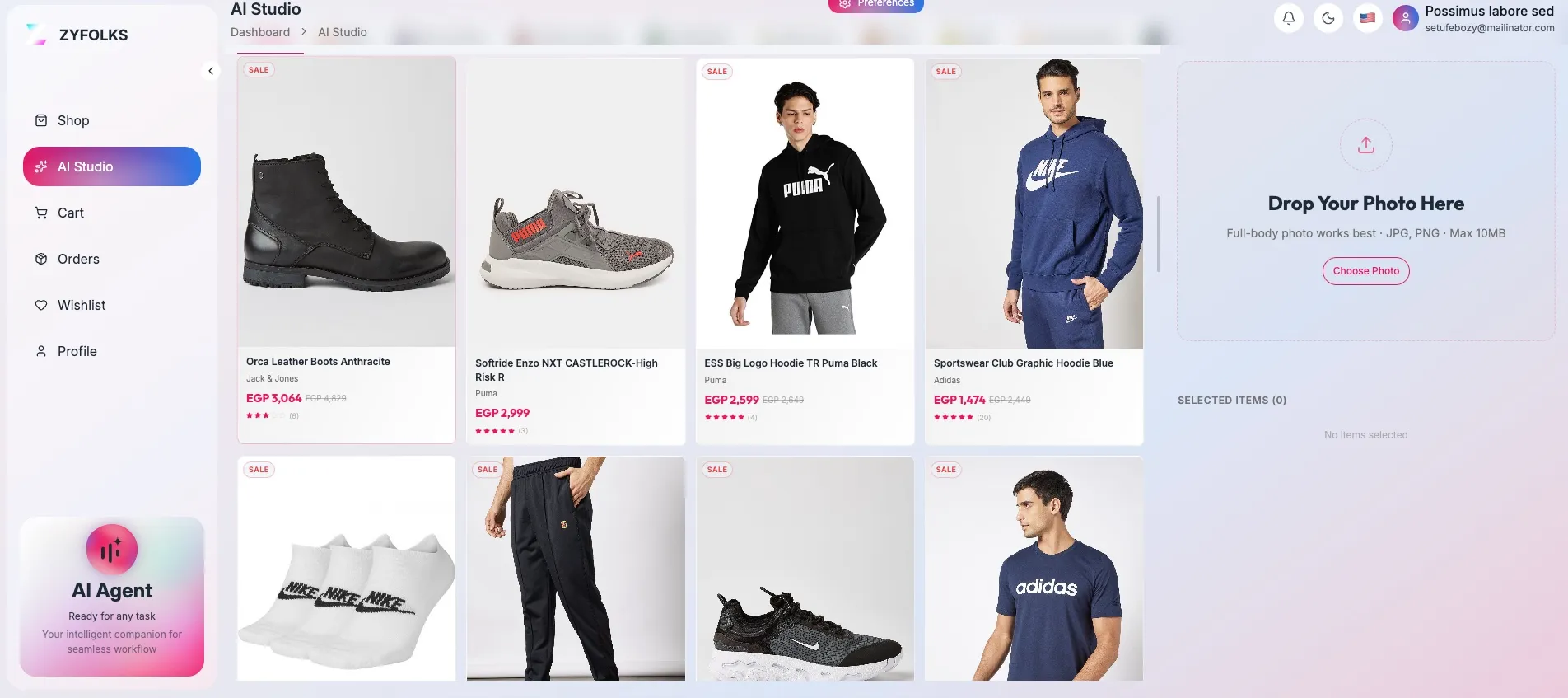

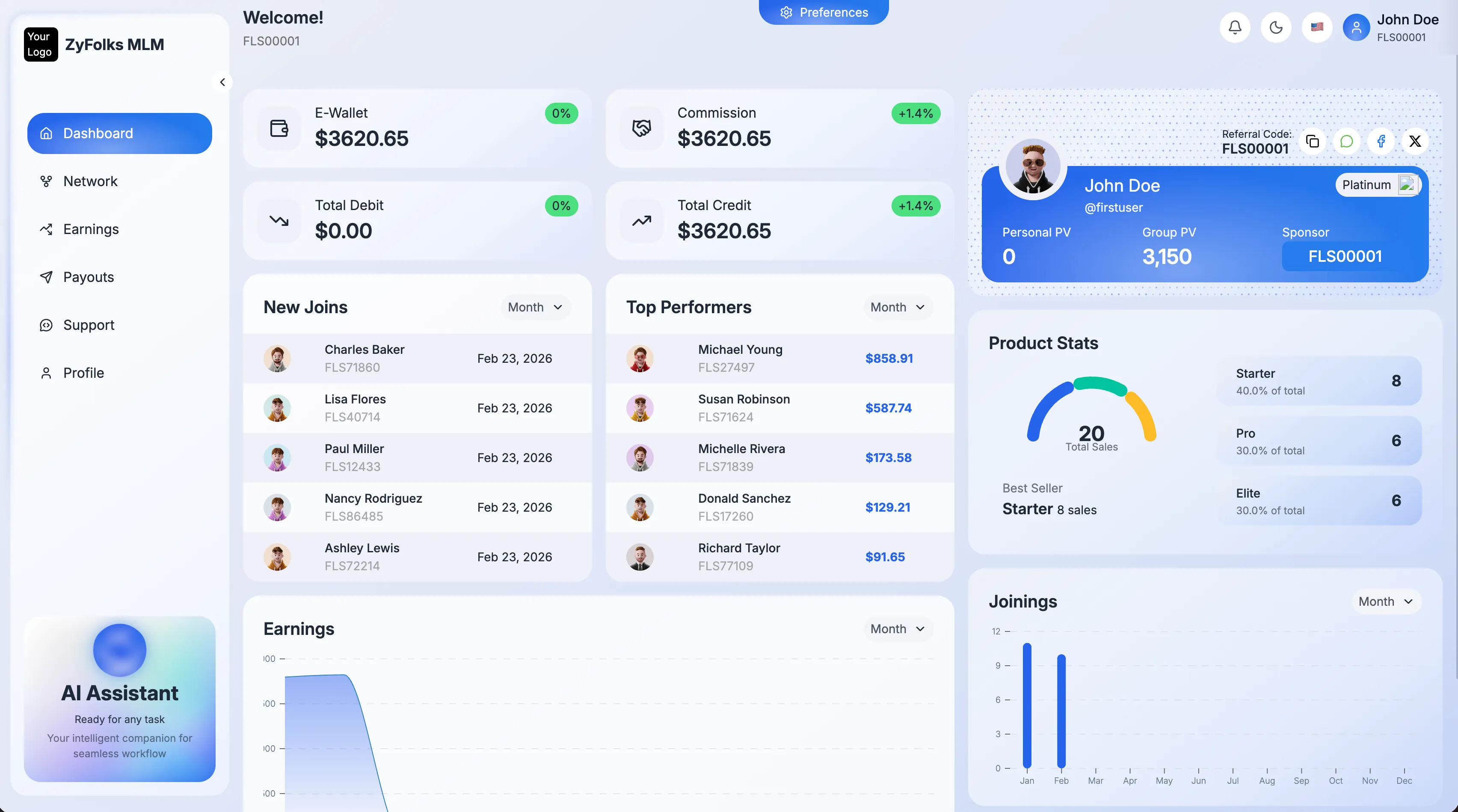

Typical patterns: resolve customer support tickets end-to-end (not just answer FAQs), research prospects and draft tailored outreach, act as an internal knowledge assistant with semantic search over your docs, or run multi-step research for analysts. See our AI Agents service for common production setups.
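The goal, tool call, read result, repeat cycle described above can be sketched in a few lines. Everything named here is an assumption for illustration: the two tools are toy functions, and `scripted_planner` is a deterministic stand-in for the LLM that would normally choose the next step. The `max_steps` cap is one simple guardrail against an agent that never decides it is finished.

```python
from typing import Callable

# Hypothetical tools; each takes a string argument and returns a string result.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "send_reply": lambda text: f"sent: {text}",
}


def scripted_planner(goal: str, history: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: picks the next (tool, argument) from what happened so far."""
    if not history:
        return ("lookup_order", "A123")
    last_tool, _, last_result = history[-1]
    if last_tool == "lookup_order":
        return ("send_reply", last_result)
    return ("finish", last_result)


def run_agent(goal: str, plan=scripted_planner, max_steps: int = 5) -> dict:
    """Loop: ask the planner for a step, run the tool, record the result, repeat."""
    history: list[tuple[str, str, str]] = []
    for _ in range(max_steps):
        tool, arg = plan(goal, history)
        if tool == "finish":
            return {"status": "done", "answer": arg, "steps": len(history)}
        if tool not in TOOLS:
            # Record the failure so the planner can react on the next turn.
            history.append((tool, arg, f"error: unknown tool {tool}"))
            continue
        history.append((tool, arg, TOOLS[tool](arg)))
    # The step cap was hit before the planner declared the task finished.
    return {"status": "step_limit", "answer": None, "steps": len(history)}
```

Calling `run_agent("Where is order A123?")` walks through two tool calls and then finishes. A planner that keeps requesting the same tool ends with `status == "step_limit"` rather than running forever.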

Side-by-Side Comparison

| Criteria | AI Automation | AI Agents |
|---|---|---|
| Best for | Predictable, repetitive tasks | Variable, judgement-heavy tasks |
| Decision logic | Pre-defined workflow with AI-powered steps | LLM reasons dynamically each run |
| Cost per run | Low and predictable (cents) | Higher and variable (cents to dollars) |
| Setup time | 2–4 weeks per workflow | 4–10 weeks per agent |
| Failure mode | Known edge cases error out cleanly | Can hallucinate or loop; needs guardrails |
| Observability | Step logs, easy to audit | Reasoning traces, harder to audit |
| Best tools | n8n, Make, Zapier, custom Python/Node | LangGraph, OpenAI Agents SDK, Claude Agent SDK, custom |

When to Choose AI Automation

Pick automation when the task is well-defined, happens often, and the same steps work every time. Examples: routing inbound emails based on content, extracting line items from invoices, generating weekly reports from database queries, syncing contacts between CRM and email, auto-replying to common support FAQs.

If you can write down the steps before the system runs, you want automation. It's cheaper to run, faster to build, and easier to test.

When to Choose AI Agents

Pick agents when each task is different enough that a fixed workflow can't cover all the cases, and the AI needs to decide what to do based on what it finds. Examples: customer support that resolves issues end-to-end (different per user), sales research where the agent decides which sources to check, internal knowledge bases where users ask novel questions, or multi-step data analysis where the conclusion depends on intermediate results.

Agents absorb ambiguity at a cost: higher LLM spend, more surface area for things to go wrong, and a harder time proving to auditors what happened in a given run. If you need that flexibility, agents are worth the complexity. If you don't, you're paying for flexibility you won't use.

How Zyfolks Approaches This

For most clients we start with AI automation on the two or three workflows that have the highest volume and the clearest process. We ship those in 4–6 weeks, measure the hours saved, and use that momentum to fund the riskier agent work.

Then, for workflows where humans were already making judgement calls (support, sales research, knowledge-intensive tasks), we layer AI agents on top. The agents call the automations as tools — the automations do the repetitive mechanical work, the agent reasons about what to do. That split keeps costs sane and observability sharp.
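One way to picture "the agents call the automations as tools" is a small registry that wraps each automation and logs every call. This is a sketch under our own assumptions, not a description of any particular framework: `automation_tool`, `TOOL_REGISTRY`, `AUDIT_LOG`, and the toy `tag_ticket` automation are hypothetical names.

```python
from typing import Callable

TOOL_REGISTRY: dict[str, dict] = {}
AUDIT_LOG: list[dict] = []


def automation_tool(name: str, description: str):
    """Decorator that exposes an existing automation to the agent as a named tool."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOL_REGISTRY[name] = {"description": description, "run": fn}
        return fn
    return decorator


@automation_tool("tag_ticket", "Return a category label for a support ticket")
def tag_ticket(text: str) -> str:
    # Toy automation; a real one might be an existing n8n or Python workflow.
    return "billing" if "refund" in text.lower() else "general"


def call_tool(name: str, argument: str) -> str:
    """Single entry point the agent uses, so every tool call lands in the audit log."""
    if name not in TOOL_REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    result = TOOL_REGISTRY[name]["run"](argument)
    AUDIT_LOG.append({"tool": name, "argument": argument, "result": result})
    return result
```

Routing every call through `call_tool` means the agent's reasoning may be hard to audit, but the actions it took are recorded step by step, much like an automation's step log.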

Related reading: our cost guide for AI chatbots covers budget tiers for agent projects specifically.

Frequently Asked Questions

Common questions about choosing between AI agents and AI automation.

Agents do not replace automation entirely. Automation is still the right fit for repetitive, well-defined workflows with clear inputs and outputs. Agents shine when tasks require judgement, tool use, or reasoning across multiple steps. Many production systems use both: an agent orchestrates, automations execute.

Automation is usually cheaper per execution — it's predictable, often rule-based, and doesn't call an LLM on every step. Agents cost more because they burn LLM tokens reasoning through tasks. Budget-wise, automation scales linearly; agents can get expensive if you run them at high volume without cost caps.
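A cost cap can be as simple as a per-run budget that every model call is charged against. The sketch below is illustrative only: `RunBudget` and `BudgetExceeded` are hypothetical names, and the cap and per-token rate in the usage note are made-up numbers, not real pricing.

```python
from decimal import Decimal


class BudgetExceeded(RuntimeError):
    """Raised when a call would push the run past its spending cap."""


class RunBudget:
    def __init__(self, cap_usd: str, usd_per_1k_tokens: str):
        # Decimal avoids float rounding surprises when adding small amounts.
        self.cap = Decimal(cap_usd)
        self.rate = Decimal(usd_per_1k_tokens)
        self.spent = Decimal("0")

    def charge(self, tokens: int) -> Decimal:
        """Record the cost of one model call, or refuse it if the cap would be exceeded."""
        cost = self.rate * tokens / 1000
        if self.spent + cost > self.cap:
            raise BudgetExceeded(f"cap {self.cap} USD reached (spent {self.spent})")
        self.spent += cost
        return self.spent
```

With an assumed `RunBudget(cap_usd="0.05", usd_per_1k_tokens="0.01")`, two calls of 2,000 tokens each are accepted and a third is refused. What the agent does on refusal (stop, summarize, or hand off to a person) is a separate design decision.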

Start with the lowest-risk, highest-volume task. If it's predictable (same shape of input, same steps every time), automate. If the decision changes based on content (unstructured email, variable customer intent, research across sources), an agent is the right tool.

Both approaches can keep a human in the loop, and we recommend it for anything high-stakes. In automation, human review is a workflow step. In agents, it's a tool the agent can call ('escalate to human'), typically triggered by a confidence threshold or a matching policy rule.
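An escalation check along these lines might look like the sketch below. The threshold, the refund limit, and the `ProposedAction` type are assumptions chosen for illustration; real values would come from your own policies and from however your system estimates confidence.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.8  # assumed value; tune per workflow
REFUND_LIMIT_USD = 100      # hypothetical policy rule


@dataclass
class ProposedAction:
    kind: str          # e.g. "refund" or "reply"
    amount_usd: float
    confidence: float  # the system's own confidence estimate, from 0 to 1


def needs_human(action: ProposedAction) -> tuple[bool, str]:
    """Return (escalate?, reason) for an action the agent wants to take."""
    if action.confidence < CONFIDENCE_THRESHOLD:
        return True, "low confidence"
    if action.kind == "refund" and action.amount_usd > REFUND_LIMIT_USD:
        return True, "refund above policy limit"
    return False, "auto-approved"
```

Keeping the rule in plain code rather than in the prompt means the escalation policy can be reviewed and tested like any other automation step.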

Still unsure which path fits your workflow?

Send us the workflow, its volume, and who owns it today. We'll tell you honestly whether automation, an agent, or a hybrid makes sense — and give you a rough cost + timeline before you commit.